| Box-Cox Normality Plot | |

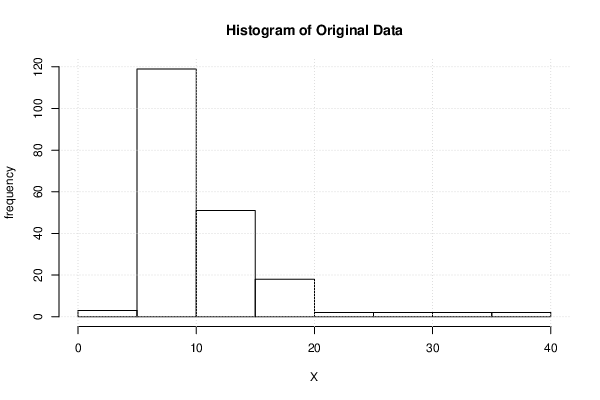

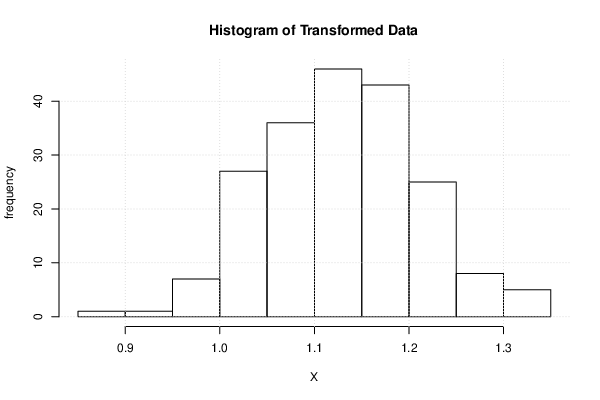

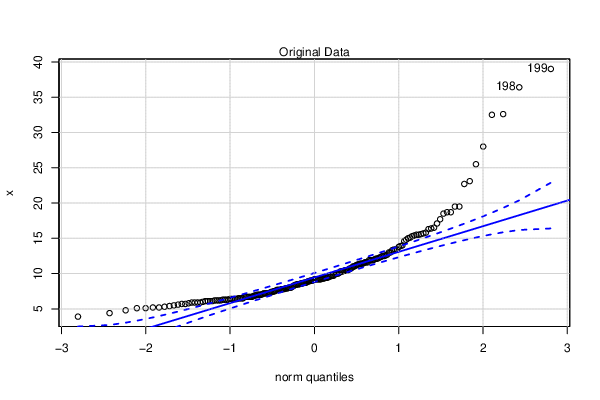

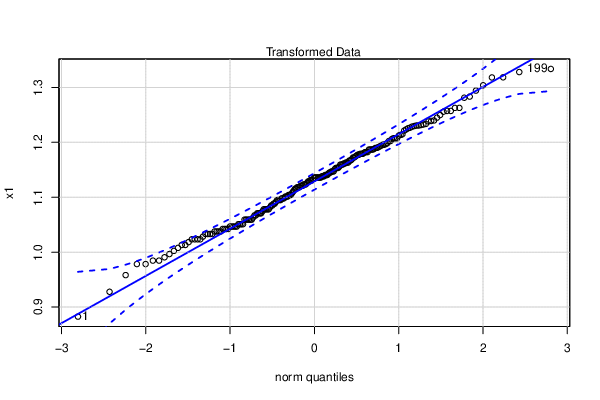

| # observations x | 199 |

| maximum correlation | 0.997876153103535 |

| optimal lambda | -0.69 |

| transformation formula | for all lambda ≠ 0: T(Y) = (Y^lambda - 1) / lambda |
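The "maximum correlation" and "optimal lambda" rows match the usual Box-Cox normality plot idea: transform the data for each candidate lambda, correlate the sorted transformed values with standard normal quantiles, and keep the lambda with the highest correlation. The following is a minimal sketch of that idea, not the tool's actual implementation. The plotting positions `(i - 0.5) / n`, the search grid, and the helper names `box_cox`, `normal_scores`, `pearson`, and `best_lambda` are assumptions. The sample is a small subset of the Original column, so the result is not expected to reproduce -0.69.

```python
import math
from statistics import NormalDist


def box_cox(y, lam):
    """Box-Cox transform; the lam == 0 branch is the standard log limit."""
    return math.log(y) if lam == 0 else (y ** lam - 1.0) / lam


def normal_scores(n):
    """Standard normal quantiles at plotting positions (i - 0.5) / n (assumed convention)."""
    nd = NormalDist()
    return [nd.inv_cdf((i - 0.5) / n) for i in range(1, n + 1)]


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equal-length sequences."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def best_lambda(data, lo=-2.0, hi=2.0, step=0.01):
    """Grid-search lambda; return (max correlation, lambda at the maximum)."""
    ys = sorted(data)  # the transform is increasing in y, so the order is preserved
    scores = normal_scores(len(ys))
    best_r, best_lam = float("-inf"), None
    for k in range(round((hi - lo) / step) + 1):
        lam = round(lo + k * step, 10)
        r = pearson(scores, [box_cox(y, lam) for y in ys])
        if r > best_r:
            best_r, best_lam = r, lam
    return best_r, best_lam


# Observations 1, 20, 40, ..., 180 and 199 from the Original column (a subset only).
sample = [3.9, 6.1, 6.5, 7.3, 8.2, 9.2, 10, 11.3, 12.6, 15.7, 39]
r, lam = best_lambda(sample)
print(f"max correlation = {r:.6f} at lambda = {lam:.2f}")
```

Running the same search on all 199 observations would be the natural way to compare against the reported values, with the caveat that a different plotting-position convention can shift the optimum slightly.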

| Obs. | Original | Transformed |

| 1 | 3.9 | 0.882623486970309 |

| 2 | 4.4 | 0.92787832092391 |

| 3 | 4.8 | 0.958260706134117 |

| 4 | 5.1 | 0.9783766663759 |

| 5 | 5.1 | 0.9783766663759 |

| 6 | 5.2 | 0.984643913232501 |

| 7 | 5.2 | 0.984643913232501 |

| 8 | 5.3 | 0.990710726461036 |

| 9 | 5.4 | 0.996587119705227 |

| 10 | 5.5 | 1.00228243285757 |

| 11 | 5.6 | 1.00780538864538 |

| 12 | 5.7 | 1.0131641435525 |

| 13 | 5.7 | 1.0131641435525 |

| 14 | 5.8 | 1.01836633373166 |

| 15 | 5.9 | 1.02341911647723 |

| 16 | 5.9 | 1.02341911647723 |

| 17 | 5.9 | 1.02341911647723 |

| 18 | 5.9 | 1.02341911647723 |

| 19 | 6 | 1.02832920775448 |

| 20 | 6.1 | 1.03310291621917 |

| 21 | 6.1 | 1.03310291621917 |

| 22 | 6.1 | 1.03310291621917 |

| 23 | 6.1 | 1.03310291621917 |

| 24 | 6.2 | 1.03774617410694 |

| 25 | 6.2 | 1.03774617410694 |

| 26 | 6.2 | 1.03774617410694 |

| 27 | 6.2 | 1.03774617410694 |

| 28 | 6.3 | 1.04226456532598 |

| 29 | 6.3 | 1.04226456532598 |

| 30 | 6.3 | 1.04226456532598 |

| 31 | 6.3 | 1.04226456532598 |

| 32 | 6.4 | 1.04666335104575 |

| 33 | 6.4 | 1.04666335104575 |

| 34 | 6.4 | 1.04666335104575 |

| 35 | 6.4 | 1.04666335104575 |

| 36 | 6.4 | 1.04666335104575 |

| 37 | 6.5 | 1.05094749304037 |

| 38 | 6.5 | 1.05094749304037 |

| 39 | 6.5 | 1.05094749304037 |

| 40 | 6.5 | 1.05094749304037 |

| 41 | 6.7 | 1.0591903221148 |

| 42 | 6.7 | 1.0591903221148 |

| 43 | 6.7 | 1.0591903221148 |

| 44 | 6.7 | 1.0591903221148 |

| 45 | 6.7 | 1.0591903221148 |

| 46 | 6.7 | 1.0591903221148 |

| 47 | 6.8 | 1.06315761880257 |

| 48 | 6.9 | 1.06702752525234 |

| 49 | 6.9 | 1.06702752525234 |

| 50 | 7 | 1.07080379241206 |

| 51 | 7 | 1.07080379241206 |

| 52 | 7 | 1.07080379241206 |

| 53 | 7 | 1.07080379241206 |

| 54 | 7.1 | 1.07448997585471 |

| 55 | 7.2 | 1.07808944853263 |

| 56 | 7.2 | 1.07808944853263 |

| 57 | 7.2 | 1.07808944853263 |

| 58 | 7.2 | 1.07808944853263 |

| 59 | 7.2 | 1.07808944853263 |

| 60 | 7.3 | 1.08160541253556 |

| 61 | 7.4 | 1.08504090994265 |

| 62 | 7.4 | 1.08504090994265 |

| 63 | 7.5 | 1.08839883284951 |

| 64 | 7.5 | 1.08839883284951 |

| 65 | 7.6 | 1.09168193264285 |

| 66 | 7.7 | 1.0948928285885 |

| 67 | 7.7 | 1.0948928285885 |

| 68 | 7.7 | 1.0948928285885 |

| 69 | 7.7 | 1.0948928285885 |

| 70 | 7.8 | 1.09803401579162 |

| 71 | 7.8 | 1.09803401579162 |

| 72 | 7.8 | 1.09803401579162 |

| 73 | 7.9 | 1.10110787258238 |

| 74 | 7.9 | 1.10110787258238 |

| 75 | 7.9 | 1.10110787258238 |

| 76 | 8 | 1.10411666737539 |

| 77 | 8 | 1.10411666737539 |

| 78 | 8 | 1.10411666737539 |

| 79 | 8.1 | 1.10706256504626 |

| 80 | 8.2 | 1.10994763286494 |

| 81 | 8.3 | 1.11277384602138 |

| 82 | 8.4 | 1.11554309277634 |

| 83 | 8.4 | 1.11554309277634 |

| 84 | 8.5 | 1.1182571792667 |

| 85 | 8.5 | 1.1182571792667 |

| 86 | 8.5 | 1.1182571792667 |

| 87 | 8.6 | 1.1209178339922 |

| 88 | 8.6 | 1.1209178339922 |

| 89 | 8.6 | 1.1209178339922 |

| 90 | 8.7 | 1.12352671200832 |

| 91 | 8.7 | 1.12352671200832 |

| 92 | 8.7 | 1.12352671200832 |

| 93 | 8.8 | 1.12608539884749 |

| 94 | 8.9 | 1.12859541418917 |

| 95 | 8.9 | 1.12859541418917 |

| 96 | 9 | 1.13105821529751 |

| 97 | 9 | 1.13105821529751 |

| 98 | 9 | 1.13105821529751 |

| 99 | 9.1 | 1.1334752002437 |

| 100 | 9.2 | 1.13584771092866 |

| 101 | 9.2 | 1.13584771092866 |

| 102 | 9.2 | 1.13584771092866 |

| 103 | 9.2 | 1.13584771092866 |

| 104 | 9.2 | 1.13584771092866 |

| 105 | 9.2 | 1.13584771092866 |

| 106 | 9.2 | 1.13584771092866 |

| 107 | 9.3 | 1.13817703592049 |

| 108 | 9.3 | 1.13817703592049 |

| 109 | 9.3 | 1.13817703592049 |

| 110 | 9.4 | 1.14046441311988 |

| 111 | 9.4 | 1.14046441311988 |

| 112 | 9.4 | 1.14046441311988 |

| 113 | 9.5 | 1.1427110322656 |

| 114 | 9.6 | 1.14491803729129 |

| 115 | 9.6 | 1.14491803729129 |

| 116 | 9.7 | 1.1470865285437 |

| 117 | 9.7 | 1.1470865285437 |

| 118 | 9.7 | 1.1470865285437 |

| 119 | 9.9 | 1.15131216559674 |

| 120 | 10 | 1.15337131236674 |

| 121 | 10 | 1.15337131236674 |

| 122 | 10 | 1.15337131236674 |

| 123 | 10.1 | 1.15539595091101 |

| 124 | 10.3 | 1.1593453164731 |

| 125 | 10.3 | 1.1593453164731 |

| 126 | 10.3 | 1.1593453164731 |

| 127 | 10.4 | 1.16127176978577 |

| 128 | 10.4 | 1.16127176978577 |

| 129 | 10.5 | 1.16316717033628 |

| 130 | 10.5 | 1.16316717033628 |

| 131 | 10.5 | 1.16316717033628 |

| 132 | 10.7 | 1.16686794268634 |

| 133 | 10.7 | 1.16686794268634 |

| 134 | 10.8 | 1.16867481229887 |

| 135 | 11 | 1.17220507408313 |

| 136 | 11 | 1.17220507408313 |

| 137 | 11.1 | 1.17392981746649 |

| 138 | 11.2 | 1.17562849949142 |

| 139 | 11.3 | 1.17730174141348 |

| 140 | 11.3 | 1.17730174141348 |

| 141 | 11.4 | 1.17895014435163 |

| 142 | 11.4 | 1.17895014435163 |

| 143 | 11.4 | 1.17895014435163 |

| 144 | 11.5 | 1.18057429011052 |

| 145 | 11.6 | 1.18217474196246 |

| 146 | 11.6 | 1.18217474196246 |

| 147 | 11.6 | 1.18217474196246 |

| 148 | 11.9 | 1.18683930418653 |

| 149 | 11.9 | 1.18683930418653 |

| 150 | 11.9 | 1.18683930418653 |

| 151 | 11.9 | 1.18683930418653 |

| 152 | 12 | 1.18835026778227 |

| 153 | 12.1 | 1.18984010066748 |

| 154 | 12.1 | 1.18984010066748 |

| 155 | 12.2 | 1.19130926935274 |

| 156 | 12.3 | 1.19275822633551 |

| 157 | 12.4 | 1.19418741063088 |

| 158 | 12.5 | 1.19559724827799 |

| 159 | 12.5 | 1.19559724827799 |

| 160 | 12.6 | 1.19698815282367 |

| 161 | 12.7 | 1.19836052578432 |

| 162 | 13 | 1.20237029899644 |

| 163 | 13 | 1.20237029899644 |

| 164 | 13.3 | 1.20622667659008 |

| 165 | 13.4 | 1.20747964833286 |

| 166 | 13.4 | 1.20747964833286 |

| 167 | 13.4 | 1.20747964833286 |

| 168 | 13.8 | 1.21233756887066 |

| 169 | 13.9 | 1.21351505058515 |

| 170 | 14 | 1.21467830260812 |

| 171 | 14.6 | 1.22137372842758 |

| 172 | 14.8 | 1.22350323472193 |

| 173 | 15 | 1.22558465616364 |

| 174 | 15.1 | 1.22660787127897 |

| 175 | 15.3 | 1.22862033738538 |

| 176 | 15.4 | 1.22960998442415 |

| 177 | 15.5 | 1.23058883028516 |

| 178 | 15.5 | 1.23058883028516 |

| 179 | 15.6 | 1.23155706140547 |

| 180 | 15.7 | 1.23251485983593 |

| 181 | 15.8 | 1.23346240337148 |

| 182 | 16.3 | 1.23805223800049 |

| 183 | 16.4 | 1.23894176281543 |

| 184 | 16.5 | 1.23982216814964 |

| 185 | 17.1 | 1.24492115051123 |

| 186 | 17.7 | 1.24972645433962 |

| 187 | 18.5 | 1.25572124387725 |

| 188 | 18.7 | 1.25715199017738 |

| 189 | 18.7 | 1.25715199017738 |

| 190 | 19.5 | 1.26262577784434 |

| 191 | 19.5 | 1.26262577784434 |

| 192 | 22.7 | 1.28120425251733 |

| 193 | 23.1 | 1.28321780579988 |

| 194 | 25.5 | 1.29416593843619 |

| 195 | 28 | 1.30385947166563 |

| 196 | 32.5 | 1.31807005881621 |

| 197 | 32.6 | 1.31834789550753 |

| 198 | 36.4 | 1.32793900448384 |

| 199 | 39 | 1.33357989878712 |
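The Transformed column can be spot-checked by applying the transformation formula with the reported optimal lambda of -0.69. The sketch below recomputes three rows taken from the table; the function name `box_cox` and the choice of rows are illustrative assumptions.

```python
import math

LAMBDA = -0.69  # "optimal lambda" from the summary rows


def box_cox(y, lam):
    """T(Y) = (Y^lam - 1) / lam for lam != 0; log(Y) is the standard lam == 0 limit."""
    if y <= 0:
        raise ValueError("Box-Cox requires strictly positive values")
    return math.log(y) if lam == 0 else (y ** lam - 1.0) / lam


# Observation number -> (Original, Transformed) as listed in the table.
rows = {
    1: (3.9, 0.882623486970309),
    120: (10, 1.15337131236674),
    199: (39, 1.33357989878712),
}

diffs = {}
for obs, (original, reported) in rows.items():
    recomputed = box_cox(original, LAMBDA)
    diffs[obs] = abs(recomputed - reported)
    print(f"obs {obs}: original={original} recomputed={recomputed:.12f} reported={reported}")
```

Because lambda is negative, the transformed values are bounded above by -1/lambda (about 1.449), which is why the long right tail of the original data (22.7 to 39) is compressed into a narrow range near 1.3.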

| Maximum Likelihood Estimation of Lambda |

> summary(mypT)

bcPower Transformation to Normality

| | Est Power | Rounded Pwr | Wald Lwr Bnd | Wald Upr Bnd |

| x | -0.6811 | -0.5 | -0.9768 | -0.3853 |

Likelihood ratio test that transformation parameter is equal to 0 (log transformation)

| | LRT | df | pval |

| LR test, lambda = (0) | 22.25967 | 1 | 2.3816e-06 |

Likelihood ratio test that no transformation is needed

| | LRT | df | pval |

| LR test, lambda = (1) | 152.3224 | 1 | < 2.22e-16 |
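The p-values in the two likelihood ratio tests follow from comparing each LRT statistic with a chi-square distribution on 1 degree of freedom. As a rough check, and assuming that reference distribution, the upper-tail probability can be computed with the standard library alone, since for 1 degree of freedom P(X > x) = erfc(sqrt(x / 2)). The function name `chi2_sf_df1` is an illustrative assumption.

```python
import math


def chi2_sf_df1(x):
    """Upper-tail probability of a chi-square variable with 1 degree of freedom."""
    return math.erfc(math.sqrt(x / 2.0))


lrt_log = 22.25967   # LR test, lambda = 0 (log transformation)
lrt_none = 152.3224  # LR test, lambda = 1 (no transformation)

p_log = chi2_sf_df1(lrt_log)
p_none = chi2_sf_df1(lrt_none)

print(f"lambda = 0: p = {p_log:.4e}")
print(f"lambda = 1: p = {p_none:.4e}")
```

Both hypotheses are rejected at any conventional level, in line with the Wald interval (-0.9768, -0.3853) excluding both 0 and 1. The "< 2.22e-16" entry in the R output is a display floor at machine epsilon, not the exact p-value.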